Hugging Face and AWS: Streamlining Foundation Model Development and Deployment

Quick Summary

- Hugging Face and AWS are collaborating to provide robust building blocks for training foundation models and running inference on them, simplifying complex AI development.

- This initiative integrates Hugging Face's popular open-source tools with AWS's scalable infrastructure, empowering developers to build and deploy advanced AI solutions more efficiently.

Hugging Face and AWS Join Forces: Unlocking the Future of Foundation Model Development

The landscape of artificial intelligence is rapidly evolving, with Foundation Models (FMs) like Large Language Models (LLMs) and diffusion models pushing the boundaries of what's possible. However, the journey from concept to deployment for these massive models is often fraught with complexity, demanding significant computational resources, specialized expertise, and optimized tooling. Recognizing this critical challenge, Hugging Face, the driving force behind open-source AI, has fortified its collaboration with Amazon Web Services (AWS) to deliver a comprehensive suite of "building blocks" designed to streamline the training and inference of these powerful models on AWS infrastructure.

The Core Update: Integrated Building Blocks for Advanced AI

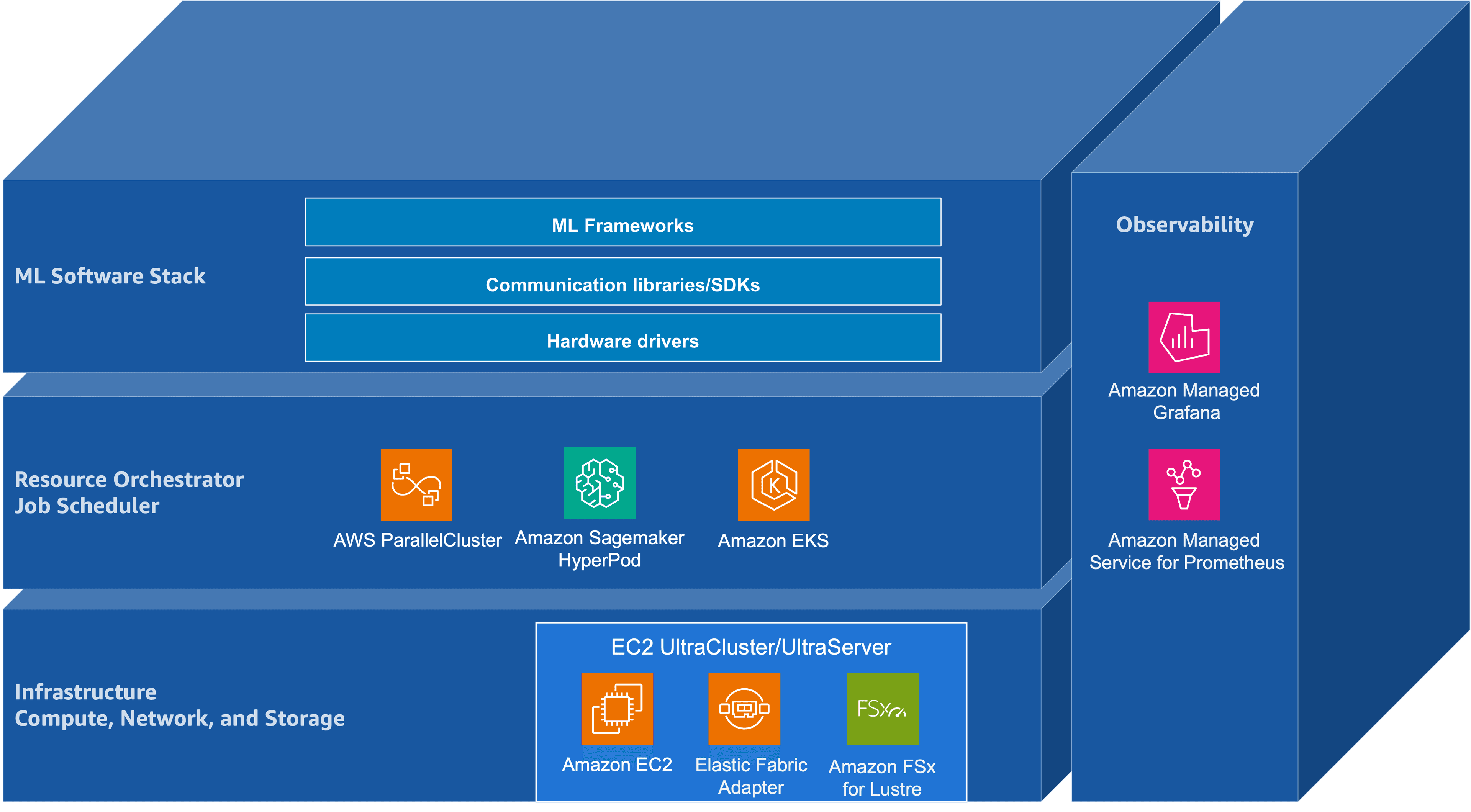

This strategic alignment between Hugging Face and AWS represents a significant leap forward for AI developers and enterprises. The initiative focuses on integrating Hugging Face's widely adopted open-source libraries and tools—such as Transformers, Accelerate, and Optimum—with AWS's robust, scalable, and cost-efficient cloud services. The goal is to demystify and accelerate the entire lifecycle of foundation models, from initial experimentation and large-scale distributed training to high-performance, cost-effective inference serving.

Developers can now leverage optimized software environments, pre-configured containers, and seamless integrations that abstract away much of the underlying infrastructure complexity. This means less time spent on setup and configuration, and more time focused on model development, fine-tuning, and deployment. Whether it's training a custom LLM on massive datasets or deploying a generative AI model for real-time applications, these integrated building blocks provide the necessary foundation.

Key Highlights and Features

The collaborative efforts bring forth several crucial capabilities:

- Optimized Software Stack: Deep integration of Hugging Face's core libraries, including `transformers` for easy access to state-of-the-art models, `accelerate` for simplifying distributed training across multiple GPUs, and `optimum` for performance optimizations specific to AWS hardware.

- Seamless AWS Integration: Direct compatibility and optimized workflows for AWS services like Amazon SageMaker for end-to-end ML lifecycle management, Amazon EC2 instances (including those with NVIDIA GPUs), and specialized AWS Inferentia and Trainium chips for accelerated inference and training respectively.

- Hardware Acceleration: Support for AWS's purpose-built machine learning accelerators, Inferentia (for inference) and Trainium (for training), which are designed to offer higher performance at lower cost than general-purpose hardware on supported workloads.

- Scalable Distributed Training: Tools and configurations that make it easier to perform large-scale distributed training, allowing developers to efficiently train models with billions of parameters across clusters of AWS instances.

- Efficient Inference Serving: Simplified deployment of foundation models for inference using solutions like Hugging Face's Text Generation Inference (TGI), optimized for low-latency, high-throughput serving on AWS, significantly reducing operational overhead.

- Cost-Effectiveness: By leveraging AWS's elastic infrastructure and specialized accelerators alongside Hugging Face's optimization libraries, users can achieve better price-performance for both training and inference workloads.
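
To make the Efficient Inference Serving item more concrete, here is a minimal sketch of building a request for a TGI server's `/generate` route, which accepts a JSON body with an `inputs` string and a `parameters` object. It uses only the Python standard library. The function names, the default values, and the server URL in the usage note are assumptions made for illustration; the article does not describe a specific deployment.

```python
import json
import urllib.request


def build_generate_payload(prompt: str, max_new_tokens: int = 64,
                           temperature: float = 0.7) -> dict:
    """Build a JSON-serializable body for a TGI /generate request.

    The defaults here are illustrative, not recommended settings.
    """
    if not prompt:
        raise ValueError("prompt must be a non-empty string")
    if max_new_tokens < 1:
        raise ValueError("max_new_tokens must be at least 1")
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    return {
        "inputs": prompt,
        "parameters": {
            "max_new_tokens": max_new_tokens,
            "temperature": temperature,
            "do_sample": True,  # sampling is needed for temperature to matter
        },
    }


def generate(base_url: str, payload: dict, timeout: float = 30.0) -> str:
    """POST the payload to a running TGI server and return the generated text.

    base_url is hypothetical: it must point at a server you have deployed.
    """
    request = urllib.request.Request(
        url=f"{base_url.rstrip('/')}/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())["generated_text"]


if __name__ == "__main__":
    # Only builds and prints the request body; no network call is made here.
    body = build_generate_payload("What is a foundation model?", max_new_tokens=32)
    print(json.dumps(body, indent=2))
```

With a server running, a call such as `generate("http://localhost:8080", body)` would return the completion as a string; the host and port are placeholders for wherever the container is exposed.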

Why This Matters: Impacting the AI Ecosystem

This enhanced collaboration carries profound implications for the entire AI community:

- Democratization of Advanced AI: By lowering the technical and operational barriers, more developers, researchers, and enterprises—regardless of their cloud infrastructure expertise—can access, train, and deploy sophisticated foundation models.

- Accelerated Innovation: Simplifying the underlying infrastructure allows engineers to focus on model innovation, leading to faster development cycles and quicker iterations on cutting-edge AI applications.

- Optimized Performance and Cost: The deep integration with AWS's specialized hardware is intended to let foundation models run efficiently while keeping operational costs under control, a crucial factor for large-scale AI projects.

- Enterprise Adoption: Provides a more robust, secure, and supported pathway for enterprises to integrate advanced generative AI capabilities into their products and services, fostering broader industry adoption.

- Strengthening the Open-Source Ecosystem: Reinforces the value of open-source contributions by making Hugging Face's innovative tools even more accessible and performant on a leading cloud platform.
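
The price-performance point in the list above comes down to simple arithmetic: cost per million generated tokens equals the hourly instance price divided by tokens served per hour, times one million. The sketch below compares two serving options this way. Every name and number in it is a hypothetical placeholder, not AWS pricing or a measured throughput; substitute your own benchmark results.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class ServingOption:
    """One candidate deployment; all fields are user-supplied measurements."""
    name: str
    hourly_price_usd: float
    tokens_per_second: float


def cost_per_million_tokens(option: ServingOption) -> float:
    """Return USD per one million generated tokens at sustained throughput."""
    if option.hourly_price_usd < 0:
        raise ValueError("hourly_price_usd must not be negative")
    if option.tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = option.tokens_per_second * 3600
    return option.hourly_price_usd / tokens_per_hour * 1_000_000


def cheapest(options: Iterable[ServingOption]) -> ServingOption:
    """Pick the option with the lowest cost per million tokens."""
    return min(options, key=cost_per_million_tokens)


if __name__ == "__main__":
    # Placeholder figures chosen only to exercise the arithmetic.
    candidates = [
        ServingOption("gpu-placeholder", hourly_price_usd=4.0, tokens_per_second=1000.0),
        ServingOption("accelerator-placeholder", hourly_price_usd=3.0, tokens_per_second=1200.0),
    ]
    for candidate in candidates:
        print(f"{candidate.name}: ${cost_per_million_tokens(candidate):.2f} per 1M tokens")
    print("cheapest:", cheapest(candidates).name)
```

This comparison assumes the instance is kept busy at the measured throughput; idle time, batching behavior, and latency targets would all change the real figure.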

Conclusion: A Future Paved with Accessible AI Innovation

The collaboration between Hugging Face and AWS is not just about providing tools; it is about building a more accessible, efficient, and powerful ecosystem for foundation model development. By abstracting away much of the complexity inherent in large-scale AI, the two companies aim to let a broader range of innovators harness the transformative potential of models like LLMs.

As foundation models continue to grow in size and capability, the demand for streamlined infrastructure and optimized workflows will only intensify. This collaboration sets a strong precedent, promising a future where cutting-edge AI is not just a domain for a select few, but a powerful asset available to every developer and organization striving to build the next generation of intelligent applications. The road ahead points towards even more integrated solutions, further pushing the boundaries of what's possible with AI on the cloud.
